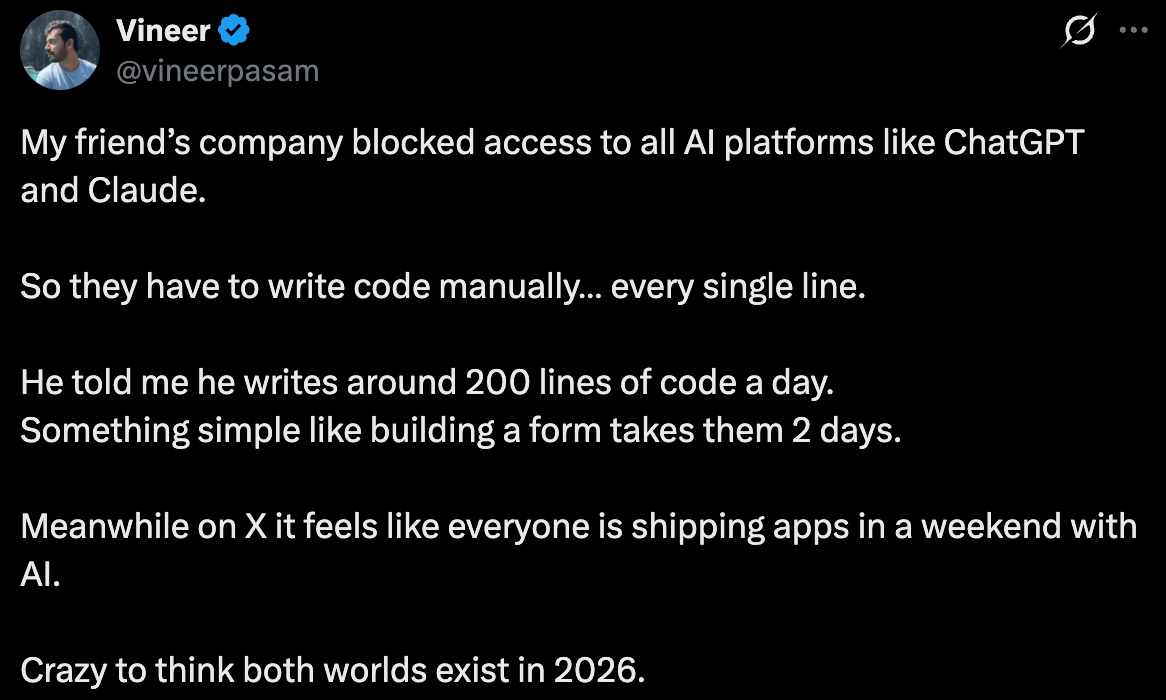

Parallel Worlds in 2026

We have entered a wrinkle in time where the past and future coexist.

One of my good friends and his girlfriend decided that “Olly and AI are a match made in hell,” and that AI is the perfect tool to feed my obsessive personality and/or delusions.

I love this characterization. I think AI Olly is the alter ego I needed to balance Outside Olly, who runs, eats chocolate, and sleeps in alleyways.

This week, we return to the question: is AI all hype? I still consistently see memes comparing Claude to slot machines or saying that the senior engineer is the one who can hit the “Enter” key the fastest.

AI is Not Hype

Part of what’s happening is that the people calling AI hype are using the free versions, while the people getting the most out of AI are paying the most. At this point, I can perfectly understand why someone would pay for two Claude Max subscriptions per month and still hit the quota on each.

AI is incredibly powerful, and for those who have figured out how to leverage it, it’s worth paying more. Even at $200/month, people are still getting a bargain, for now. Eventually, we will probably all have to pay a per-token price that more closely mirrors the real costs of AI (hundreds to thousands of dollars per month).

Thousands of dollars per month, you say? Yes. AI is smart and can do big work. If you have stuff to do on a computer, or stuff a personal assistant could do, AI can do it for you. No questions asked. But it takes AI time, you have to pay, and you have to teach the AI how to do it. “Kind of like a human?” you ask. “Yes! Like a human.”

Aside: Will AI write my blogs? No, please enjoy my typos. I do think I can write better blogs with AI helping me research and serving as something to bounce ideas off of.

What does it feel like to make software with AI?

I like the way Boris Cherny talked about it on VergeCast. He doesn’t write any code (neither do I). He only writes instructions for Claude. But, as he describes it, in many ways it doesn’t feel that different from programming. Software engineers have always had to learn and adapt to ever-changing tech stacks.

In many ways, AI is just another tech stack to learn. In other ways, it is a bigger change than any that came before. We no longer write computer code, and often we don’t even read it.

Some of the world’s greatest software engineers are the ones who hate AI the most. They say, “I write much better code than AI.” They are correct, but who cares? What happens to the best engineer on a team? They get promoted, and then they have to manage engineers who write worse code than they do. The point is not whether AI does something better or worse; it’s to take a step back, stop meddling in the details, and define an end goal well enough that AI can hit it.

There is still a strong element of flow to programming with AI. The engineers who love AI the most are the ones who play it like an instrument. They have built their own relationships with AI, become fascinated by it, explored it, and learned its capabilities and limitations through direct interaction.

Some engineers claim they have become so efficient with AI that their limiting factor is typing speed. The guy who created OpenClaw does most of his programming by dictating to an AI. He actually lost his voice from programming.

I cosplay as an engineer limited by typing speed. AI programming does feel like playing an instrument to me, though. Or even like downhill skiing. It is full flow, all-absorbing, nonstop, and I can either totally crash out or have the most cruisey, delightful experience in the world.

Yesterday, I got so excited that I had to force myself to close the laptop and go to bed. Today, I totally crashed out when all my agents failed and left me figuring out how to fix four mistakes on four different tasks at the same time. But I will view today’s expensive mistakes as an equally valuable lesson (is that how it works?) and focus on broader learnings that, I hope, will solve all four mistakes at once tomorrow.

Parallel Workflows

Two weeks ago, I had two agents working on separate apps at the same time. Today, I had four agents working on the same app at the same time because I set up “isolated workflows”.

The complexity compounds with parallel workflows, and with it, the opportunities for making big mistakes. It’s pretty exhilarating to get more AIs working at the same time, though, kind of like skiing down a double black. My underlying principle has been: as long as I’m taking the time to sit behind a computer, I can work alone, with a partner, or with a whole team, so I might as well go with a team.

The difference is most evident in the AI bill I’m racking up. Today alone, I used as many credits as I did in the entire month of February. Did I get as much done today as I did in the entire month of February? Nope, not even close.

The jury’s still out on whether or not I’m throwing money down the toilet (or lighting barrels of oil on fire). I’m either learning how to compose like Beethoven or allowing AI to feed my delusions of becoming Beethoven.

My obsession has been meta-work: teaching an AI how to do my job, rather than delivering new software for our company. Today, my boss had to remind me that my job is to actually release new apps with fixes that resolve problems for other people. (So this next week will put my practice to the test: Beethoven or bust.)

How did I get so side-tracked?

My latest idea is to get my workflow to a point where I can bring my job to the Andes with me.

That would require me to coach Claude to release new apps itself, with my permission. I would text back and forth with Claude, review Claude’s software designs, answer any implementation questions Claude has, test Claude’s new apps, and authorize Claude to publish app releases to production, all from my phone, never touching a laptop. I believe that reality is within reach, but it’s easier said than done.

Before going to the Andes, I need to streamline app deployments with a full CI/CD pipeline, write great instructions for Claude to follow, and then do lots of practice runs with Claude.

Boris Cherny said that in the future, programming will either die or turn into an art. The people who program the way we picture it today will be the ones who do it out of passion, not as a profession.

Nonetheless, the most valuable programmers were never really just programming. They have always had to coordinate and compromise between different parties, figure out what’s most important to do in the face of infinitely many options, and deliver solutions.